MAML Meta-Learning

11/16/2023

A core capability of intelligent systems is the ability to quickly learn new tasks by drawing on prior experience. Gradient-based (or optimization-based) meta-learning has recently emerged as an effective approach to few-shot learning. In this formulation, meta-parameters are learned in the outer loop, while task-specific parameters are adapted in the inner loop. A gradient-based approach to meta-learning, like MAML, puts us in familiar territory: using pre-trained models and fine-tuning them.

In this work, we provide a general framework for meta-learning based on weighting the losses of different source tasks, where the weights are allowed to depend on the target samples. In this general setting, we provide upper bounds on the distance between the weighted empirical risk of the source tasks and the expected target risk in terms of an integral probability metric (IPM) and Rademacher complexity; these bounds apply to a number of meta-learning settings, including MAML and a weighted MAML variant. We then develop a learning algorithm based on minimizing the error bound with respect to an empirical IPM, including a weighted MAML algorithm, α-MAML.
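As a rough illustration of the weighted framework, one way to write a weighted MAML-style objective is shown below. The notation is an assumption made here, not taken from the paper: $\theta$ is the shared initialization, $\mathcal{L}_{\mathcal{T}_i}$ is the loss on source task $\mathcal{T}_i$, $\eta$ is an inner-loop step size, and $w_i$ are the task weights (which, in the framework above, may depend on the target samples). In practice the inner gradient and the outer loss are computed on different samples of the same task, and uniform weights $w_i = 1/N$ recover the usual MAML average over tasks.

$$
\min_{\theta} \; \sum_{i=1}^{N} w_i \,
  \mathcal{L}_{\mathcal{T}_i}\!\left(\theta - \eta \nabla_{\theta} \mathcal{L}_{\mathcal{T}_i}(\theta)\right),
\qquad w_i \ge 0, \quad \sum_{i=1}^{N} w_i = 1
$$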

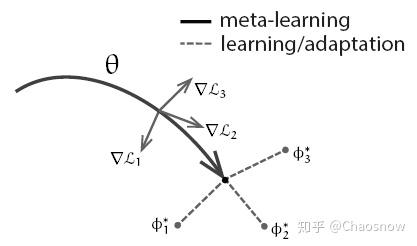

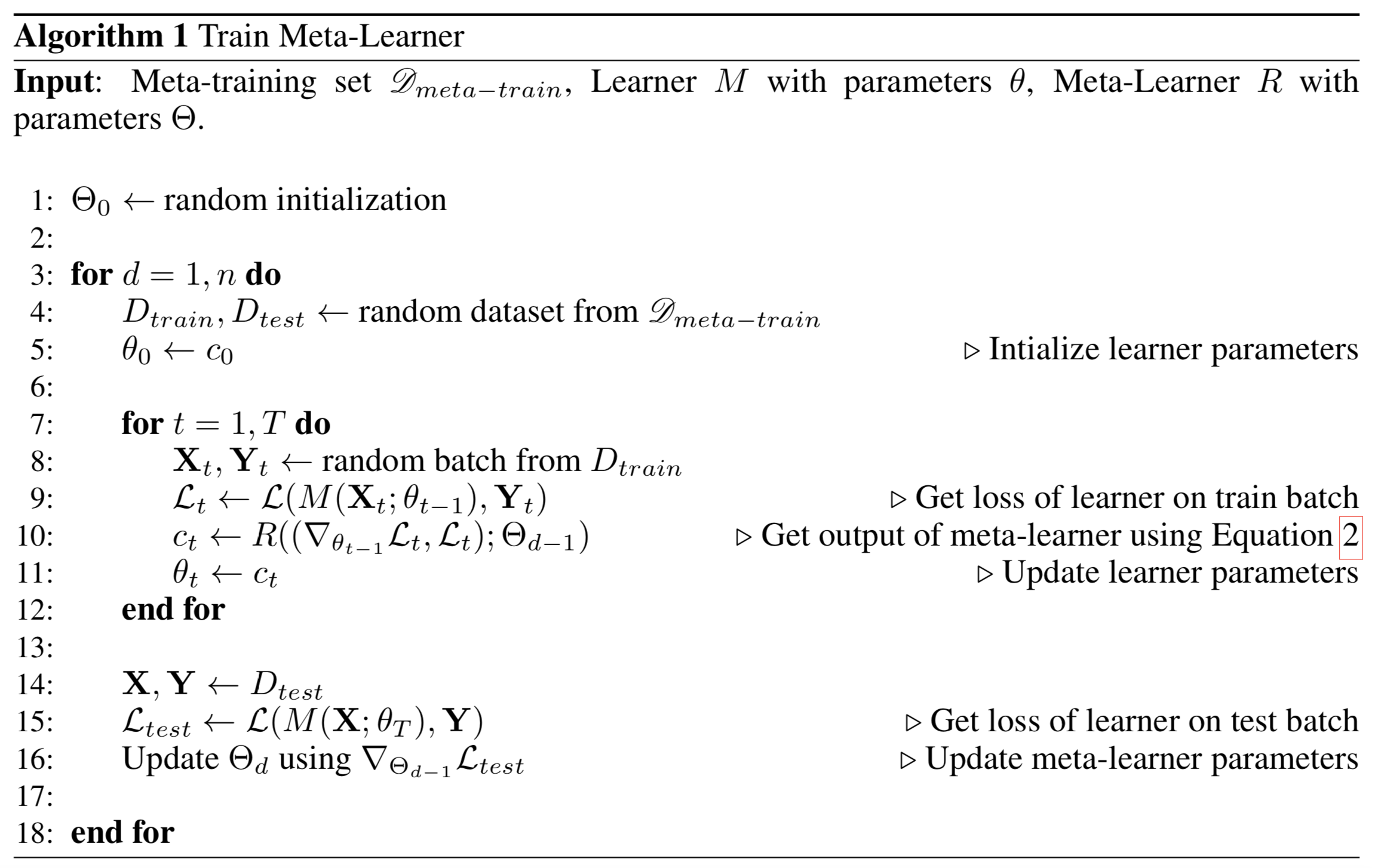

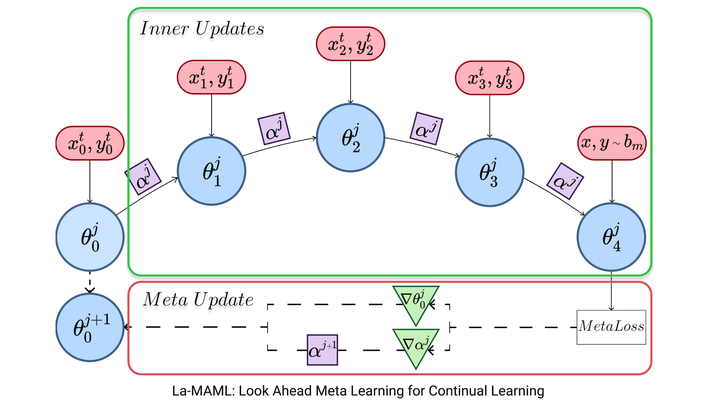

MAML, short for Model-Agnostic Meta-Learning (Finn et al., 2017), is an optimization-based meta-learning framework. It is a fairly general optimization algorithm, compatible with any model that learns through gradient descent. Its core idea is to leverage a set of auxiliary tasks to search for a good parameter initialization from which learning a target task requires only a handful of training samples. The learner thus acts as the initialization parameters for the adapter, which then performs task-specific learning. MAML has gained significant attention in the machine learning community because it can quickly adapt to new tasks using a small amount of data.

More broadly, meta-learning is a subfield of machine learning in which automatic learning algorithms are applied to metadata about machine learning experiments. There are two mainstream methods in optimization-based meta-learning: meta-initialization and meta-optimizer. Since MAML was proposed by Finn et al. [53], it has been developed extensively, and meta-initialization methods have consequently become more popular in intelligent fault diagnosis.

In this conference paper, we explore the potential of a MAML-based AI model that requires only a few data samples to yield accurate results on new users' EEG data. However, many popular meta-learning algorithms, such as MAML, only assume access to the target samples for fine-tuning.

In this work, we propose Look-ahead MAML (La-MAML), a fast optimisation-based meta-learning algorithm for online continual learning, aided by a small episodic memory. Our proposed modulation of per-parameter learning rates in the meta-learning update allows us to draw connections to prior work on hypergradients and meta-descent.
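To make the search for a good initialization concrete, here is a minimal sketch of MAML's two loops on a hypothetical toy problem: every task is a 1-D regression y = slope · x, and the model has a single weight `theta`. All names (`adapt`, `meta_gradient`, `maml`, `make_task`) and hyperparameters are illustrative assumptions, not taken from any of the papers quoted above. Because the toy loss is quadratic, the exact second-order meta-gradient can be written by hand; a real implementation would rely on automatic differentiation.

```python
"""Minimal MAML sketch on toy 1-D linear regression tasks (hypothetical setup).

Each task is y = slope * x; the model is y_hat = theta * x with a single parameter.
Because the loss is quadratic in theta, the second-order MAML gradient can be
written in closed form instead of relying on an autodiff library.
"""
from typing import List, Sequence, Tuple

Task = Tuple[List[float], List[float], List[float], List[float]]  # (x_s, y_s, x_q, y_q)


def mse(theta: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Mean squared error of y_hat = theta * x."""
    return sum((theta * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_grad(theta: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Derivative of mse(theta, xs, ys) with respect to theta."""
    return sum(2.0 * x * (theta * x - y) for x, y in zip(xs, ys)) / len(xs)


def adapt(theta: float, xs: Sequence[float], ys: Sequence[float], inner_lr: float) -> float:
    """Inner loop: one gradient step on a task's support set."""
    return theta - inner_lr * mse_grad(theta, xs, ys)


def meta_gradient(theta: float, task: Task, inner_lr: float) -> float:
    """Gradient of the post-adaptation query loss w.r.t. the initialization theta."""
    x_s, y_s, x_q, y_q = task
    adapted = adapt(theta, x_s, y_s, inner_lr)
    # Chain rule: d(adapted)/d(theta) = 1 - inner_lr * (second derivative of the
    # support loss), and that second derivative is 2 * mean(x^2) for this model.
    support_hessian = 2.0 * sum(x * x for x in x_s) / len(x_s)
    return mse_grad(adapted, x_q, y_q) * (1.0 - inner_lr * support_hessian)


def maml(tasks: Sequence[Task], theta: float = 0.0, inner_lr: float = 0.5,
         outer_lr: float = 0.5, steps: int = 1000) -> float:
    """Outer loop: gradient descent on the average post-adaptation query loss."""
    for _ in range(steps):
        grad = sum(meta_gradient(theta, t, inner_lr) for t in tasks) / len(tasks)
        theta -= outer_lr * grad
    return theta


def make_task(slope: float) -> Task:
    """Hypothetical task generator with fixed support and query inputs."""
    x_s = [-1.0, 0.5, 1.0]
    x_q = [-0.5, 1.0, -1.0]
    return x_s, [slope * x for x in x_s], x_q, [slope * x for x in x_q]


if __name__ == "__main__":
    train_tasks = [make_task(a) for a in (1.0, 2.0, 3.0)]
    theta0 = maml(train_tasks)
    print(round(theta0, 4))  # → 2.0
    x_s, y_s, x_q, y_q = make_task(2.5)  # an unseen task
    print(mse(theta0, x_q, y_q), mse(adapt(theta0, x_s, y_s, 0.5), x_q, y_q))
```

With training slopes 1, 2, and 3, the learned initialization settles at their mean, 2.0, because every task contributes a quadratic penalty of the same curvature around its own slope; a single inner step from there then lowers the loss on the unseen slope-2.5 task.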
In effect, the learner is fine-tuned with a gradient-based approach for every new task in the batch of episodes. **MAML**, or **Model-Agnostic Meta-Learning**, is a model- and task-agnostic algorithm for meta-learning that trains a model's parameters such that a small number of gradient updates leads to fast learning on a new task. Meta-learning leverages related source tasks to learn an initialization that can be quickly fine-tuned to a target task with limited labeled examples. The meta-loss indicates how well the model performs on the task.

In the reinforcement-learning setting, the meta-training algorithm is divided into two parts. First, for a given set of tasks, we sample multiple trajectories using the policy and update the parameters using one (or multiple) gradient step(s) of the policy gradient objective.
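The per-episode fine-tuning and the meta-loss described above can be sketched on the same kind of toy regression tasks. This is a supervised stand-in, not the policy-gradient version: the hypothetical `fine_tune` takes one or more plain gradient steps on a task's support set, and the hypothetical `meta_loss` averages the query-set loss over a batch of tasks after that fine-tuning. The task generator, step size, and function names are all illustrative assumptions.

```python
"""Sketch: inner-loop fine-tuning over a batch of tasks and the resulting meta-loss.

Toy, hypothetical setup: each task is y = slope * x, the model is y_hat = theta * x,
and the loss is mean squared error.
"""
from typing import List, Sequence, Tuple

Task = Tuple[List[float], List[float], List[float], List[float]]  # (x_s, y_s, x_q, y_q)


def mse(theta: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Mean squared error of y_hat = theta * x."""
    return sum((theta * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_grad(theta: float, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Derivative of mse(theta, xs, ys) with respect to theta."""
    return sum(2.0 * x * (theta * x - y) for x, y in zip(xs, ys)) / len(xs)


def fine_tune(theta: float, xs: Sequence[float], ys: Sequence[float],
              lr: float, steps: int) -> float:
    """Take `steps` plain gradient steps on one task's support set."""
    for _ in range(steps):
        theta -= lr * mse_grad(theta, xs, ys)
    return theta


def meta_loss(theta: float, tasks: Sequence[Task], lr: float, steps: int) -> float:
    """Average query-set loss after fine-tuning from `theta` on each support set."""
    total = 0.0
    for x_s, y_s, x_q, y_q in tasks:
        adapted = fine_tune(theta, x_s, y_s, lr, steps)
        total += mse(adapted, x_q, y_q)
    return total / len(tasks)


def make_task(slope: float) -> Task:
    """Hypothetical task generator with fixed support and query inputs."""
    x_s = [-1.0, 0.5, 1.0]
    x_q = [-0.5, 1.0, -1.0]
    return x_s, [slope * x for x in x_s], x_q, [slope * x for x in x_q]


if __name__ == "__main__":
    batch = [make_task(a) for a in (1.0, 2.0, 3.0)]  # one batch of episodes
    for init in (0.0, 2.0):
        for k in (0, 1, 5):
            print(f"init={init} steps={k} meta-loss={meta_loss(init, batch, 0.1, k):.4f}")
```

Running it shows the meta-loss shrinking as more inner steps are taken, and an initialization near the center of the task family (2.0) scoring lower than one far from it (0.0) for the same number of steps, which is exactly the quantity the outer loop of MAML tries to reduce.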
